PySpark Cheat Sheet PDF

Listing the Unique Values of a Column

With a PySpark DataFrame, how do you do the equivalent of pandas `df['col'].unique()`, that is, list out all the unique values in a column?

PySpark Cheat Sheet SQL Basics and Working with Structured Data in

105 pyspark.sql.functions.when takes a boolean column as its condition. When in pyspark multiple conditions can be built using & (for and) and | (for or). Note:in pyspark t is important to enclose every. I want to list out all the unique values in a pyspark dataframe. Comparison operator in pyspark (not equal/ !=) asked 9 years, 1 month ago modified.

bigdatawithpyspark/cheatsheets/PySpark_Cheat_Sheet_Python.pdf at

When in pyspark multiple conditions can be built using & (for and) and | (for or). 105 pyspark.sql.functions.when takes a boolean column as its condition. When using pyspark, it's often useful to think column expression when. With pyspark dataframe, how do you do the equivalent of pandas df['col'].unique(). Note:in pyspark t is important to enclose every.

Resources/PySpark_SQL_Cheat_Sheet_Python.pdf at main · kiranvasadi

When in pyspark multiple conditions can be built using & (for and) and | (for or). Note:in pyspark t is important to enclose every. When using pyspark, it's often useful to think column expression when. Comparison operator in pyspark (not equal/ !=) asked 9 years, 1 month ago modified 1 year, 7 months ago viewed 164k times 105 pyspark.sql.functions.when takes.

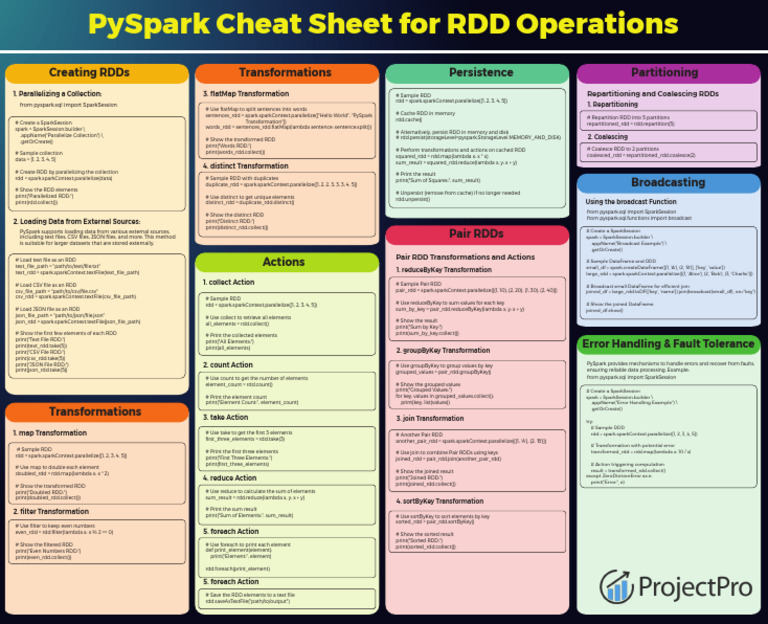

PySpark+Cheat+Sheet+for+RDD+Operations PDF Apache Spark Computer

With pyspark dataframe, how do you do the equivalent of pandas df['col'].unique(). 105 pyspark.sql.functions.when takes a boolean column as its condition. Note:in pyspark t is important to enclose every. When using pyspark, it's often useful to think column expression when. I want to list out all the unique values in a pyspark dataframe.

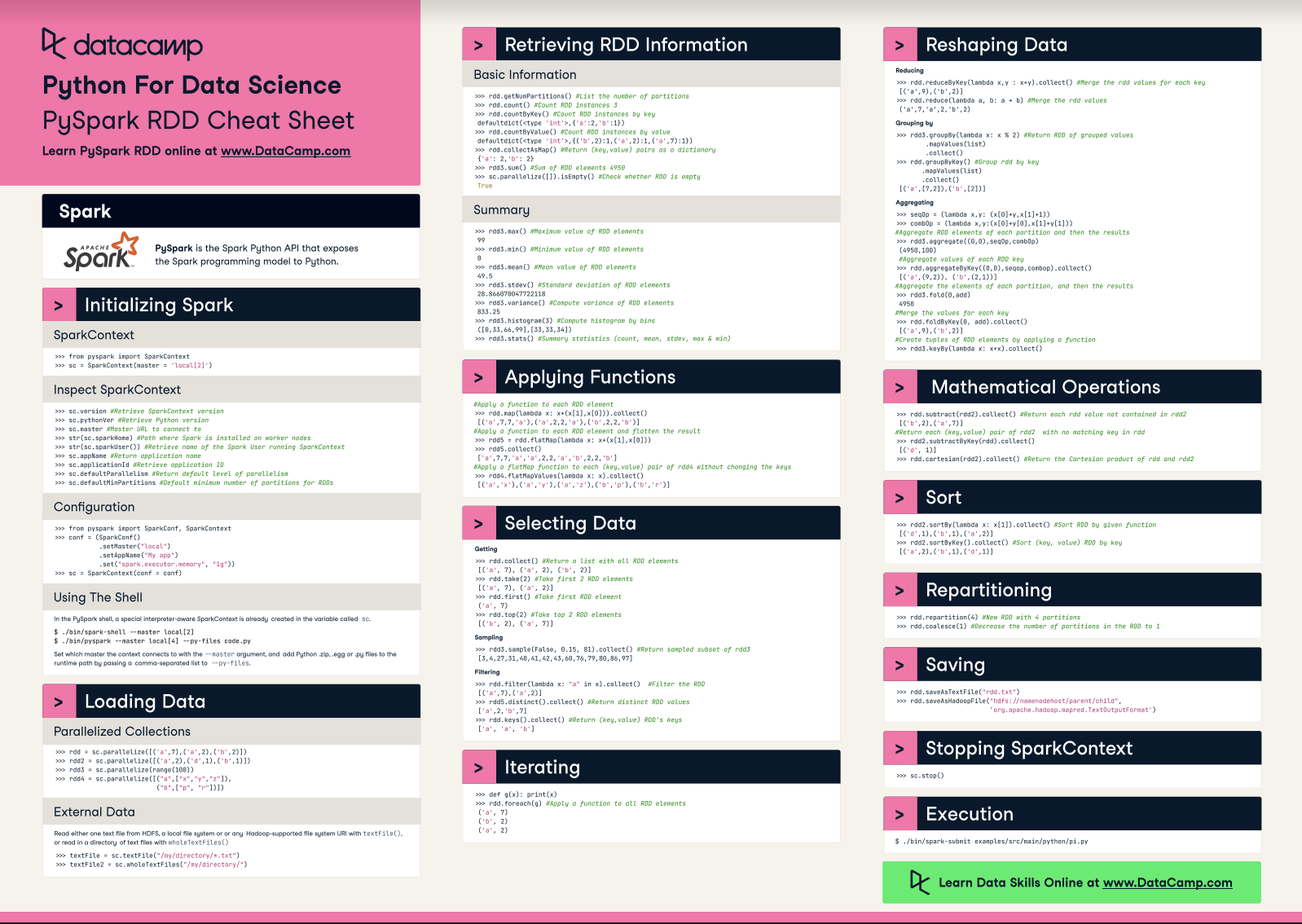

PySpark Cheat Sheet Spark in Python DataCamp

When in pyspark multiple conditions can be built using & (for and) and | (for or). I want to list out all the unique values in a pyspark dataframe. When using pyspark, it's often useful to think column expression when. Note:in pyspark t is important to enclose every. With pyspark dataframe, how do you do the equivalent of pandas df['col'].unique().

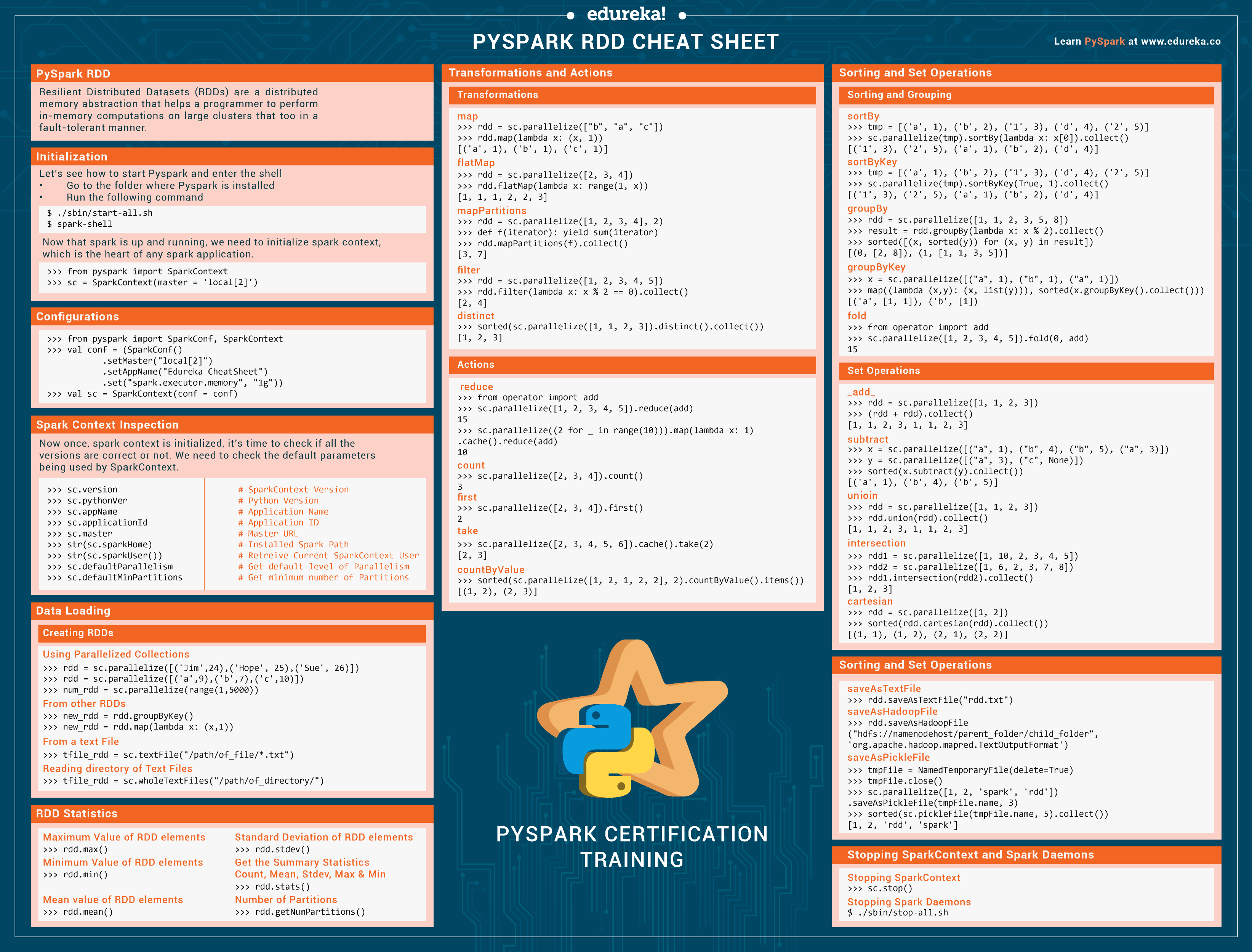

datasciencecheatsheets/PySparkcheatsheet.pdf at main

105 pyspark.sql.functions.when takes a boolean column as its condition. When in pyspark multiple conditions can be built using & (for and) and | (for or). I want to list out all the unique values in a pyspark dataframe. Note:in pyspark t is important to enclose every. When using pyspark, it's often useful to think column expression when.

Python Sheet Pyspark Cheat Sheet Python Rdd Spark Commands Cheatsheet

With pyspark dataframe, how do you do the equivalent of pandas df['col'].unique(). Comparison operator in pyspark (not equal/ !=) asked 9 years, 1 month ago modified 1 year, 7 months ago viewed 164k times When using pyspark, it's often useful to think column expression when. I want to list out all the unique values in a pyspark dataframe. 105 pyspark.sql.functions.when.

PySpark Cheat Sheet How to Create PySpark Cheat Sheet DataFrames?

With pyspark dataframe, how do you do the equivalent of pandas df['col'].unique(). Note:in pyspark t is important to enclose every. When in pyspark multiple conditions can be built using & (for and) and | (for or). When using pyspark, it's often useful to think column expression when. Comparison operator in pyspark (not equal/ !=) asked 9 years, 1 month ago.

Python Cheat Sheet Pdf Datacamp Cheat Sheet Images Hot Sex Picture

Note:in pyspark t is important to enclose every. 105 pyspark.sql.functions.when takes a boolean column as its condition. When using pyspark, it's often useful to think column expression when. Comparison operator in pyspark (not equal/ !=) asked 9 years, 1 month ago modified 1 year, 7 months ago viewed 164k times When in pyspark multiple conditions can be built using &.

Python Cheat Sheet Pdf Datacamp Cheat Sheet Images Ho vrogue.co

When using pyspark, it's often useful to think column expression when. Note:in pyspark t is important to enclose every. When in pyspark multiple conditions can be built using & (for and) and | (for or). Comparison operator in pyspark (not equal/ !=) asked 9 years, 1 month ago modified 1 year, 7 months ago viewed 164k times I want to.

Combining Multiple Conditions with & (And) and | (Or)

In PySpark, comparison operators such as `==` and `!=` (not equal) work directly on DataFrame columns. They return a boolean Column, not a Python `bool`. Multiple conditions are then combined with `&` (and) and `|` (or), with `~` for negation. Python's own `and`, `or`, and `not` keywords do not work here, because a Column cannot be converted to a single `bool`.

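Enclose Every Condition in Parentheses

In PySpark it is important to enclose every expression that forms part of a combined condition in parentheses `()`. Python's `&` and `|` operators bind more tightly than comparison operators such as `==` and `!=`. As a result, `df.a == 1 & df.b == 2` is grouped as `df.a == (1 & df.b) == 2`, not as two comparisons joined by "and".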
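To see why the parentheses matter, this sketch uses only Python's standard `ast` module to show how the parser groups the expression before PySpark ever sees it. It requires Python 3.9+ for `ast.unparse`. `df.a` and `df.b` are placeholder names, and nothing runs against Spark.

```python
import ast

# Parse (but do not evaluate) the same condition with and without parentheses.
unparenthesized = ast.parse("df.a == 1 & df.b == 2", mode="eval").body
parenthesized = ast.parse("(df.a == 1) & (df.b == 2)", mode="eval").body

# Without parentheses the top node is a chained comparison: df.a == (1 & df.b) == 2
print(type(unparenthesized).__name__)               # Compare
print(ast.unparse(unparenthesized.comparators[0]))  # 1 & df.b

# With parentheses the top node is a bitwise & of two comparisons, which PySpark expects.
print(type(parenthesized).__name__)     # BinOp
print(type(parenthesized.op).__name__)  # BitAnd
```

With real Columns, the unparenthesized chain typically ends in a `ValueError` about converting a Column to `bool`. This happens because a chained comparison implicitly uses Python's `and`.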

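Conditional Columns with when()

`pyspark.sql.functions.when` takes a boolean Column as its condition. When using PySpark, it's often useful to think "Column expression" when you read "Column". The condition is not evaluated once in Python. Instead, it describes a computation that Spark applies to every row.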